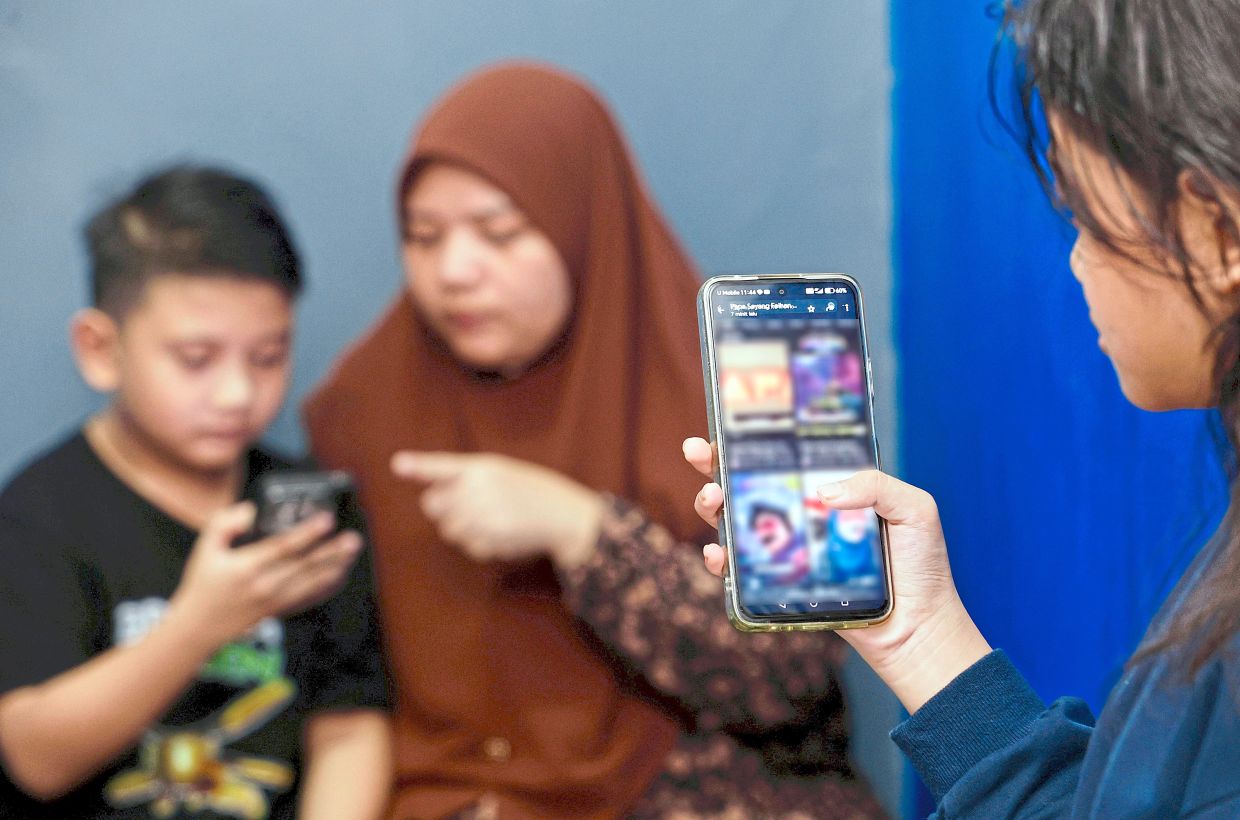

THE dinner table used to be a place for interaction and conversation, where families convened to talk about their day and spend quality time together.

Nowadays, the glow of the screen has replaced the warmth of the gaze, as parents and children – and sometimes even grandparents – have their eyes fixed on their individual screens.

Nor is this confined to the dinner table, as individuals and families alike are constantly glued to their screens throughout the day, be it for work or entertainment.

More often than not, checking our phones is the first and last thing we do each day, and the hum of notifications has become the soundtrack of modern childhood.

But as the Online Safety Act (ONSA) 2025 enters its first year of enforcement, headlined by the proposed social media ban for children under 16, one thing is becoming clear: legislation and software are only as strong as the hands that hold the device.

While the new law places the onus on tech giants to verify ages and scrub harmful content, the core of the issue remains clear: parental controls are a vital safety net, but they are not a substitute for digital literacy.

The reality is that, without proper digital literacy, even adults are susceptible to online risks.

‘Digital babysitter’ myth

For many parents, the tablet has become the “perfect” 21st-century pacifier.

Handing a child a smartphone or tablet is an easy way to keep them occupied during car rides or while dinner is being prepared.

This, however, has created a false sense of security as the digital world is full of real-world threats.

Mother-of-two Siti Nur Azhani Abdul Samat argued that the danger lies in how children process what they see.

“As adults, we know if something is just a silly joke or satire, and we dismiss it. But children interpret it differently – we don’t know if they are taking it literally,” she said.

“You think they are just watching regular cartoons or anime, but it could contain overly sexualised imagery or violent content, and they are consuming it without even realising what it is, and the impact it can have on their minds.”

ONSA is designed to make the logged-in experience safer for minors, but it cannot regulate the logged-out reality of a child alone in their bedroom with an unmonitored screen.

Prohibiting access to a platform is one thing, and managing the social pressure that leads a child to seek out alternative, less regulated corners of the web is another.

Azhani, whose children are aged eight and 11, shared that despite her cutting off online access at home, the digital babysitter culture of other parents still affects her child.

“Even though I don’t let my kids have their own devices, it’s still hard to manage their exposure online because their classmates are allowed to have phones,” she said.

“There have been times when my 11-year-old has come to me saying he feels ‘left out’ by his peers. He said that other kids often make fun of him or call him stupid for not knowing certain ‘trends’.

“So, he comes home and searches for it online just to understand what they are talking about, without realising it could be something inappropriate.”

Why controls fail

Technologically, the battle between parents and children is often a lopsided one.

For every new restriction introduced, a dozen “how-to” videos appear on the web, showing kids how to bypass them.

Whether by using virtual private networks (VPNs) to mask their location, performing a simple factory reset or browsing in “guest mode”, tech-savvy children are often two steps ahead of their parents and the software designed to limit them.

Cybersecurity expert Fong Choong Fok, who is the executive chairman of LGMS Bhd, said that while parental controls are a lock on the door, they do not stop someone from climbing in through the window.

“Having ‘safe search’ mechanisms and parental control software may not be sufficient in this day and age because there are so many ways for minors to bypass these controls,” he said.

He added that even the most robust parental and technical controls struggle with:

> The encryption wall: Most apps cannot monitor end-to-end encrypted messages on platforms like WhatsApp, leaving a blind spot for potential grooming.

> Vanish modes: Features like Snapchat’s disappearing messages or Instagram’s “Vanish Mode” allow harmful interactions to happen and be erased before a parent or guardian ever checks the logs.

The risk of stranger contact also remains a primary concern. While a child might be banned from social media platforms like TikTok, Facebook and Instagram, they could still be active on gaming platforms like Steam.

Without active supervision and proper digital education, these spaces can become breeding grounds for grooming, where predators build trust through shared interests in games like Roblox or Minecraft.

Fong added that while technological safeguards play an important role, the foundation of responsible digital behaviour ultimately lies in the values and guidance instilled at home.

“Essentially, it still boils down to family education. Many parents today are outsourcing their children to technologies – like tablets and smartphones – without instilling the right values and ethical concepts in their children,” he said.

“This is something that a lot of parents overlook. They need to take accountability when it comes to their children’s digital education – to create awareness on the ethical use of technologies, the responsibilities associated with usage and instil the right moral values rather than depending on technical controls.

“Because, if there is a way to ‘break’ or bypass controls, people will find it. So, we should not be dependent on it; we have to go back to the education aspect of digital use.”

From restriction to conversation

If technology alone cannot close the gap, the solution must lie elsewhere – at home.

Parents are encouraged to build a household culture where digital experiences are shared rather than hidden.

Active supervision does not mean hovering over a child’s shoulder 24/7, but rather keeping screens in common areas where digital life can be discussed openly rather than managed in secret.

Parents could foster this transparency by:

> Explaining the “why” over “no”: Instead of just banning the app or prohibiting use, parents are encouraged to explain the risks of data privacy and the reality of persuasive posts intended to hook young minds.

> Establishing “tech-free” zones: Designating times, such as meals or family outings, when everyone, including parents, puts their phones away.

By focusing on why certain content is harmful, parents could help their children develop an internal safety filter to protect themselves online.

Just like learning to look both ways before crossing the street, this cognitive development is far more valuable and durable than any software patch.

A team effort

ONSA is a major step forward in the nation’s bid to signal an end to the “lawless” era of social media.

However, the safety of the next generation cannot be outsourced solely to the platforms and the government.

In reality, it is a tripartite responsibility in which platforms must design for safety by default, governments must enforce strict age-assurance standards, and parents and educators must remain the first line of defence.

While parental safety controls provide the barrier, only active parenting and guidance provide the bridge to a safer digital future.

In this context, the success of the online safety laws will not be measured solely by how many accounts are removed, but by how many meaningful conversations are started at home.