MALAYSIA must urgently redefine “duty of care” in the age of artificial intelligence (AI), as the nation’s growing dependence on technology capable of making decisions on its own could reshape society faster than governments and laws can keep up.

According to Digital Minister Gobind Singh Deo, AI governance can no longer be viewed purely through the lens of punishing wrongdoing after it happens.

Governments, developers and industries must begin building preventive safeguards into AI systems before they are deployed to the public, he stresses.

In law, the duty of care is “a legal obligation that requires a person or group to take reasonable steps to avoid causing harm to others”.

“We are now talking about technology that has a mind of its own. In other words, you are essentially coming back to that original discussion, which is about technology that is able to think for itself, make a decision, implement that decision.

“... And that decision is going to have an impact on people around it, corporations around it, society as a whole, the economy, government,” Gobind tells The Star in a recent exclusive interview.

As he explains, the concept of duty of care historically evolved from human interaction and accountability. Communities create rules, governments institutionalise them into laws, and courts enforce consequences when individuals or corporations cause harm.

But AI, Gobind argues, presents a fundamentally different challenge because the “decision-maker” is no longer always a human being or a conventional corporate entity.

“If you can’t identify the personality or the person, so to speak, that is making that decision, then how do you take action against it?

“How do you enforce these rules? And how do you create a deterrent so that it thinks before it decides and it thinks again before it implements?” he points out.

The Digital Ministry is reportedly drafting an AI Governance Bill, expected to be tabled in Parliament this year, to address increasingly complex technological threats.

Gobind has said that a strong and comprehensive legal framework is essential to regulate AI-generated content, safeguard information integrity and ensure the continued security of the country’s digital ecosystem.

‘Tech personality’

The Damansara Member of Parliament also spoke of duty of care in his keynote speech at the recent International Bar Association Conference in Singapore, stating that the concept must now be understood in a broader context: not merely as a rule applied to individuals, but as a structure that operates across systems.

“Because AI systems do not act in isolation. They learn, adapt, and evolve. Harm may not arise from a single act, but from a chain of interactions over time,” he elaborates.

Gobind notes that governments worldwide are now confronting the difficult question of whether existing legal structures are sufficient to govern AI systems that can independently generate outcomes affecting people’s lives.

He suggests that future legal frameworks may eventually need to recognise what he describes as a “tech personality”, an expanded legal concept acknowledging autonomous technological systems that increasingly participate in decision-making.

Still, he stresses that accountability cannot remain abstract and that responsibility must continue to lie with those building, deploying and managing AI systems, particularly when the technology is rolled out for public use.

That includes examining the entire AI lifecycle, from the data used to train large language models (LLMs) to safeguards preventing bias, misinformation and harmful outcomes.

“The question around whether or not people who are responsible for building the model now have a duty of care to make sure that they actually build something that’s fit for purpose.

“So again, we have to start thinking about what it is we want to achieve,” he says.

Gobind adds that the government recognises both the enormous benefits and serious risks posed by AI adoption.

“While AI could improve productivity, reduce costs and transform public services, poorly governed systems could also deepen inequality, spread harmful decisions at scale and erode trust.”

For that reason, governance should not rely on a single blanket approach.

Instead, Malaysia is studying a tiered model in which different AI applications face different levels of oversight depending on their risks and societal impact, he shares.

Some lower-risk uses may only require standards and guidelines, while more sensitive sectors could need regulations or even legislation passed through Parliament.

“You can’t govern everything. You will have certain areas that have AI layers but which have lesser consequences, which don’t need legislation.

“Then you have another group which actually has more consequences but still does not need legislation, which means maybe regulations are enough.

“And then you have certain areas that are critical, which need legislation in order for governance,” Gobind says.

Awareness, access and adoption

The broader governance discussion, says Gobind, is linked to the government’s push toward an AI Nation 2030 agenda under the 13th Malaysia Plan, which aims to prepare Malaysia for widespread AI adoption by the end of the decade.

As he puts it, the government’s strategy revolves around three priorities: awareness, access and adoption.

“Firstly, Malaysians must understand both the opportunities and dangers associated with AI. People need to be aware that there is technology that can help them.

“They also need to be aware that this technology, whilst it can help them, poses risks that they must be aware about and they must also be prepared to deal with,” he says.

Secondly, citizens and businesses must have access to AI infrastructure and tools, including reliable digital systems, data governance frameworks and cybersecurity protections.

Thirdly, the government wants to ensure actual adoption across industries so that Malaysians are not left behind as global economies rapidly integrate AI technologies.

“If we say to ourselves that that technology exists but we don’t want to adopt it or we cannot adopt it, then you’re going to have a situation whereby everyone else around you moves forward as they have adopted it and you fall far behind,” he says.

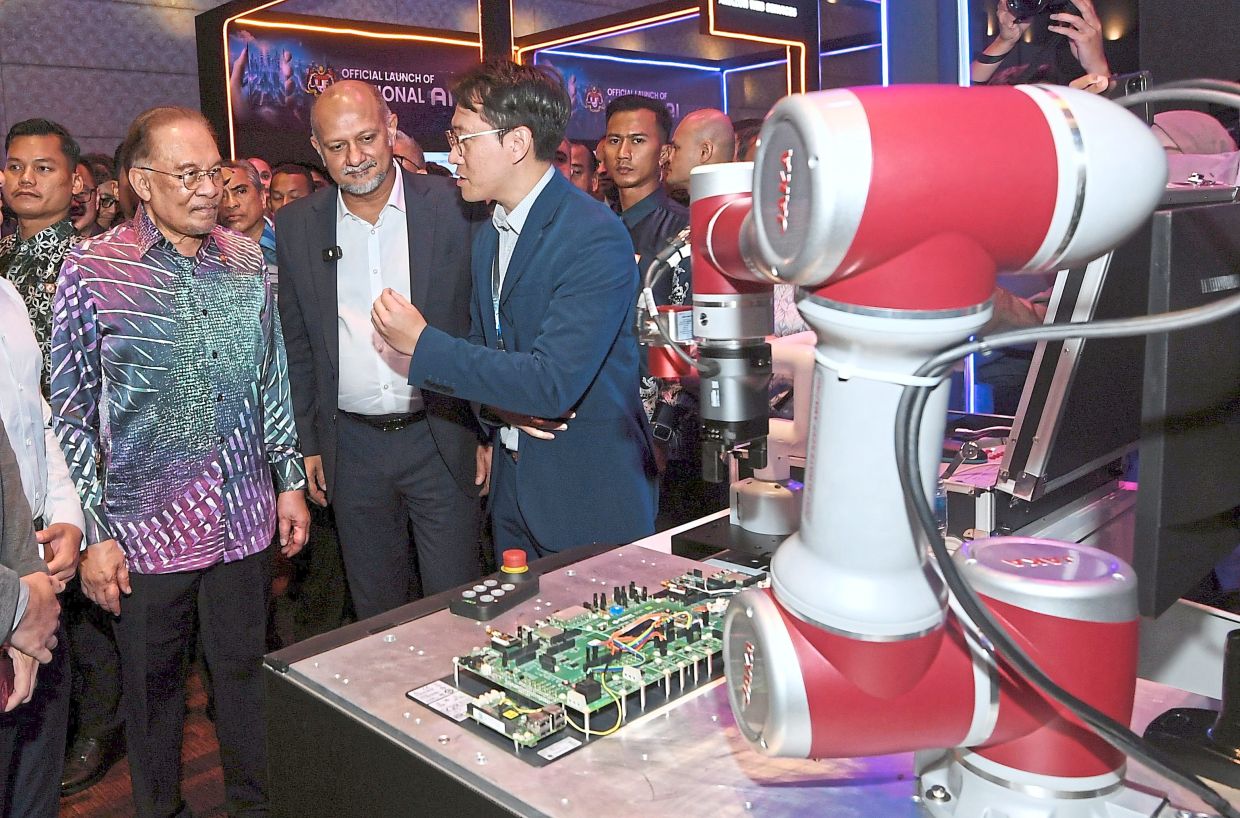

The recently established National AI Office plays a central role in coordinating those efforts across sectors and ministries, Gobind says.

The office is tasked with consulting industries, universities and government agencies to identify sector-specific challenges and formulate national AI policies that can evolve alongside technological change.

He acknowledges that the process remains ongoing and complex.

“Every sector has come back to us with individual challenges. The challenges in different sectors are different,” he says.

Gobind also points to the upcoming Government Innovation Initiative (GII) as an example of how AI and integrated data systems could reshape public administration.

Instead of ministries operating in isolation, the initiative encourages cross-ministry collaboration by using shared datasets and AI-driven solutions to tackle multiple problems simultaneously, he says.